How time complexity of Hashmap get() and put() operation is O(1)?

This is the famous interview question for the beginners as well as for experienced, So Let's see what it is all about.

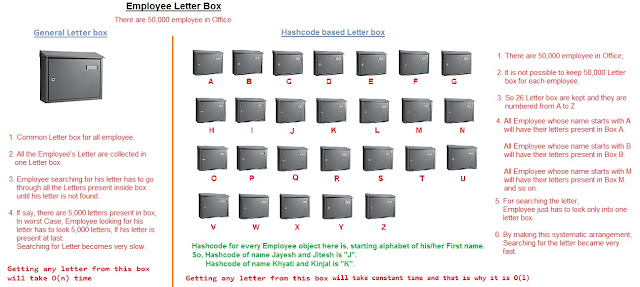

Hashmap is very popular data structure and found useful for solving many problems due to O(1) time complexity for both get and put operation. Before getting into Hashmap internals, Please read Hashmap basics and Hashcode.

Time complexity of Hashmap get() and put() operation.

For detail explanation on hashmap get and put API, Please read this post How Hashmap put and get API works.

Hashmap works on principle of hashing and internally uses hashcode as a base, for storing key-value pair.

With the help of hashcode, Hashmap distribute the objects across the buckets in such a way that hashmap put the objects and retrieve it in constant time O(1).

Before looking into Hashmap complexity, Please read about Hashcode in details.

Let's understand time complexity with the help of an example,

From the above example, it is clear that, put operation in hashmap requires,

Step 1. Computing hashcode of key,

Step 2. Calculating array index/bucket from hashcode and then,

Step 3. With the help of index calculated, directly jump to that index/bucket.

Step 4. Now, each and every element in the bucket is scanned sequentially to see, is there any

key-value pair present, which has the same key we are trying to put.

If key-value pair is found, which has same key then instead of storing

new entry / key-value pair, it simply replace value stored against key.

If no matching key is found then it will go till end of the list and create a new key-value

pair at the end.

So, only costly operation what we see here is iterating the linked list after getting the bucket or array index.

Hashmap works on principle of hashing and internally uses hashcode as a base, for storing key-value pair.

With the help of hashcode, Hashmap distribute the objects across the buckets in such a way that hashmap put the objects and retrieve it in constant time O(1).

Before looking into Hashmap complexity, Please read about Hashcode in details.

Let's understand time complexity with the help of an example,

From the above example, it is clear that, put operation in hashmap requires,

Step 1. Computing hashcode of key,

Step 2. Calculating array index/bucket from hashcode and then,

Step 3. With the help of index calculated, directly jump to that index/bucket.

Step 4. Now, each and every element in the bucket is scanned sequentially to see, is there any

key-value pair present, which has the same key we are trying to put.

If key-value pair is found, which has same key then instead of storing

new entry / key-value pair, it simply replace value stored against key.

If no matching key is found then it will go till end of the list and create a new key-value

pair at the end.

So, only costly operation what we see here is iterating the linked list after getting the bucket or array index.

If it has to iterate linked list, then how get and put operation is O(1)?

This question is perfectly valid. Hashmap put and get operation time complexity is O(1) with assumption that key-value pairs are well distributed across the buckets. It means hashcode implemented is good.

In above Letter Box example, If say hashcode() method is poorly implemented and returns hashcode 'E' always, In this case.

1. hashcode computed of Employee "Daniel" will be 'E', So all Letter of Daniel will end up in

Letter box marked "E".

Letter box marked "E".

2. hashcode computed of Employee "Jayesh" will be 'E', So all Letter of Jayesh will end up in

Letter box marked "E".

3. hashcode computed of Employee "Eric" will be 'E', So all Letter of Eric will end up putting in

Letter box marked "E".

Letter box marked "E".

3. hashcode computed of Employee "Eric" will be 'E', So all Letter of Eric will end up putting in

Letter box marked "E".

In this case how, hashmap will look like,

|

| what will happen if hashcode returns same value |

Since all letters are placed in one bucket, Put and Get operation will

no longer have time complexity of O(1) because put and get operation has to scan each letter inside the bucket for matching key.

no longer have time complexity of O(1) because put and get operation has to scan each letter inside the bucket for matching key.

In above case, get and put operation both will have time complexity O(n).

Hashmap best and average case for Search, Insert and Delete is O(1) and worst case is O(n).Hashcode is basically used to distribute the objects systematically, so that searching can be done faster.

|

| hashmap diagram |

We can say that item lookup inside bucket take expected O(1) time only under the assumptions that good hashcode() function is implemented,

Above line means to say that, If hashcode() function is written good then, hashcode generated will distribute the items across all the buckets and doesn't end up putting all item in one bucket.Say if we have 5 items and 5 bucket then good hashcode method will distribute each item in each bucket, this gives best complexity of O(1) as each bucket have only one item.

So look up required is only once.

Now, if we have 10 items and 5 bucket then good hashcode method will distribute 2 items in each bucket, In this case, number of items is doubled, still for searching any element it will require only 2 look up.

Now, if we have 20 items and 5 bucket then good hashcode method will distribute 4 items in each bucket, In this case, number of items is doubled, still for searching any element it will require only 4 look up. So far, so good.

Now, if we have 40 items and 5 bucket then good hashcode method will distribute 8 items in each bucket, In this case, number of items is doubled, still for searching any element it will require only 8 look up.

Now, performance is little bit degraded.

Now, if we have 80 items and 5 bucket then good hashcode method will distribute 16 items in each bucket, In this case, number of items is doubled, still for searching any element it will require only 16 look up.

Now, performance is little bit degraded.

But, if you observe, as the number of items are doubled, elements that need to be searched within bucket, that is the number of look ups are not increasing very high and remain almost constant compared to number of items increased.

That is why it is called that hashmap's get and put operation takes O(1) time.

To be very precise, The amortized/average case performance of Hashmap is said to be O(1) for put and get operation.

Remember, hashmap's get and put operation takes O(1) time only in case of good hashcode implementation which distributes items across buckets.In case of poor hashcode implementation, chances are there, that all elements get placed in one bucket and hashmap look like below,

|

| Hashcode collision in Hashmap in Java |

Put operation has to look into each key-value pair in bucket to see matching key is present,

If present then it needs to replace the value where key is matched.

If not present, then insert new key-value pair at end of link list.

This make put operation in worst case as O(n).

Get operation has to do linear search in bucket for Key look up, since all key-value pair are placed in one bucket, and hence complexity of get operation in worst case will be O(n).

This is why it's important to design good hash functions.

You may also like to see

What is Hashmap data structure? What is the need of Hashmap? How Hashmap data structure works in Java? What is Hashcode? Can 2 objects have same hashcode?

How Hashmap data structure works internally? How hashcode and equals method is used in hashmap? Explain with real time example.

What is Load factor and Rehashing in Hashmap?

When get/put method of Hashmap goes to Infinite loop in HashMap?

Multithreading Interview Question Answers In Java

How ConcurrentHashMap works and ConcurrentHashMap interview questions

How Much Water Can A Bar Graph with different heights can Hold

Detect loop in linked list.

0/1 Knapsack Problem solved using Iterative and Dynamic Programming

Maximum Sum Contiguous Subarray using Kadane's algorithm.

Enjoy !!!!

If you find any issue in post or face any error while implementing, Please comment.

Post a Comment